The Gaps That Slip Through M&A Due Diligence

By Sophia Martinez

December 30, 2025

M&A due diligence rarely fails because teams didn’t look closely enough. More often, it fails because they looked closely at the wrong level. Policies are reviewed, architectures are summarized and checklists are completed. By the end of diligence, there’s usually a strong sense that the target organization is “generally compliant” and “integration-ready.” And yet, post-close reality often tells a different story.

The Scenario

For this example, Riftur was used to support a hypothetical M&A technical due diligence review built on two documents that would be familiar to almost any deal team. On the acquiring side was a structured technical due diligence checklist outlining integration, security, compliance, and operational expectations. On the target side was an internal technical overview describing architecture, data handling, security controls, and development practices.

Neither document was unusual. The checklist reflected what many buyers already use to standardize diligence across deals. The technical overview reflected how many companies explain their systems internally: high-level, narrative-driven, and written for broad audiences rather than auditors.

The purpose of the exercise was not to determine whether the target company was “good” or “bad,” modern or outdated. It was to understand whether the claims made in the technical overview actually satisfied the expectations laid out in the diligence checklist, and whether any misalignment would introduce integration or compliance risk post-close.

This setup generalizes cleanly across industries. The same pattern appears whether the documents are IT checklists, HR policies, licensing requirements, financial controls, or regulatory obligations. The core question is always the same: when compared directly, do these documents actually line up?

What the Analysis Was Trying to Answer

Rather than producing a binary pass/fail result, the analysis was designed to answer a more operational question: if the acquiring company were to integrate this system immediately after close, where would assumptions break down?

To do that, checklist requirements were decomposed into auditable control statements. Broad requirements like “encryption,” “retention,” or “CI/CD security” were broken down into specific mechanisms: standards, scopes, enforcement points, and evidence types. Each of those statements was then mapped against explicit language in the technical overview.

Importantly, coverage was marked only when the required mechanism was clearly and explicitly stated. General claims or implied practices were not treated as sufficient. If a requirement was mentioned but missing key attributes, it was marked as partially covered. If it was absent or too vague to verify, it was treated as a gap.

This approach mirrors how diligence actually fails in practice. Most issues arise not because a topic was never mentioned, but because it was mentioned in a way that left room for interpretation.
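A minimal Python sketch of the coverage rule described above, assuming each control statement is represented as a set of required attributes, each with a few phrases that count as an explicit statement of it. The `ControlStatement` model, the attribute names, and the plain substring matching are hypothetical simplifications for illustration, not how Riftur performs the mapping. Treating a requirement with no verifiable attribute as a gap is also an assumption, based on the rule that vague requirements count as gaps.

```python
from dataclasses import dataclass
from enum import Enum


class Coverage(Enum):
    COVERED = "covered"
    PARTIAL = "partially covered"
    GAP = "gap"


@dataclass
class ControlStatement:
    """An auditable control decomposed from a broad checklist requirement (hypothetical model)."""
    requirement: str
    # Each required attribute maps to phrases that count as an explicit statement of it.
    attributes: dict[str, list[str]]


def classify(control: ControlStatement, overview: str) -> tuple[Coverage, list[str]]:
    """Classify coverage and return the attributes the overview never states explicitly."""
    text = overview.lower()
    missing = [
        name
        for name, phrases in control.attributes.items()
        if not any(phrase.lower() in text for phrase in phrases)
    ]
    if not missing:
        return Coverage.COVERED, missing
    if len(missing) < len(control.attributes):
        # Some mechanisms are stated, others are not: partial coverage.
        return Coverage.PARTIAL, missing
    # Nothing verifiable is stated: absent or too vague, so treat it as a gap.
    return Coverage.GAP, missing


# Hypothetical example: infrastructure-as-code is claimed, but no controls around it are stated.
iac = ControlStatement(
    requirement="Infrastructure managed as code",
    attributes={
        "mechanism": ["infrastructure-as-code"],
        "repository controls": ["branch protection", "required reviews"],
        "environment separation": ["separate production account", "environment separation"],
    },
)
status, missing = classify(iac, "All infrastructure is managed through infrastructure-as-code.")
print(status.value, missing)
```

Exact phrase matching is deliberately strict, mirroring the rule that implied practices are not sufficient; a real review would still need human judgment to catch synonyms and false matches.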

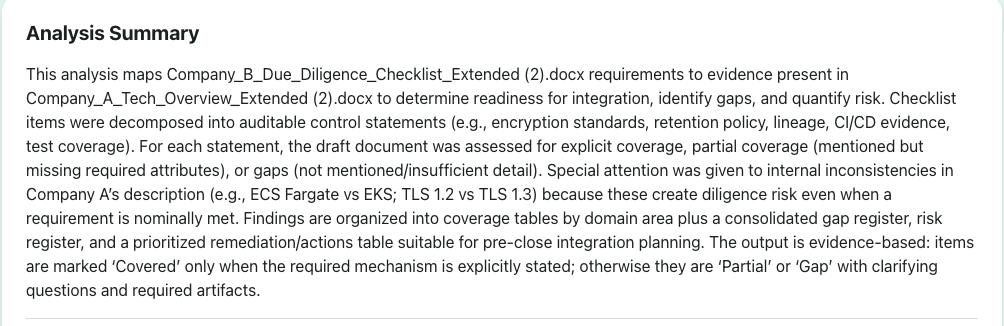

Analysis Summary

Where Confidence Starts to Erode

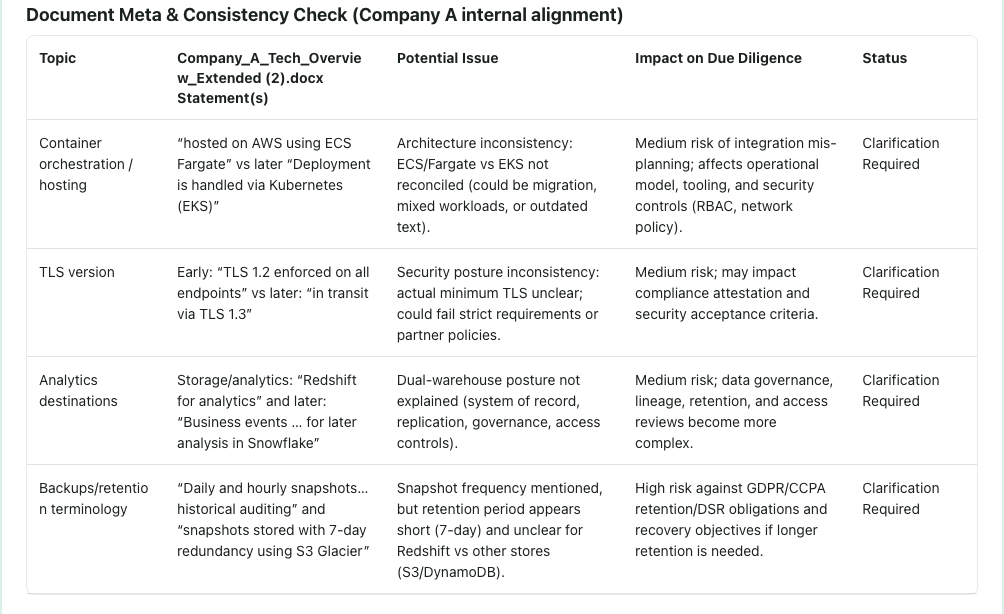

At first glance, the target company’s technical overview appeared strong. It described a cloud-native architecture, referenced modern tooling, and included language around security, compliance, and scalability. In isolation, most reviewers would be comfortable with it.

The first signs of trouble appeared when the document was evaluated for internal consistency. Different sections described different container orchestration platforms. The stated encryption standards and versions varied from one section to another. Analytics destinations were described without clearly defining systems of record or governance boundaries. Backup and retention language referenced snapshots without specifying retention periods or regulatory alignment.

None of these issues would necessarily trigger concern during a standalone review. In a diligence context, however, inconsistencies like these matter because they undermine confidence in what is actually deployed versus what is described. When internal documents disagree with themselves, it becomes difficult for an acquiring team to accurately assess readiness, risk, or remediation effort. This is often where diligence conversations become uncomfortable: not because something is obviously wrong, but because certainty begins to erode.
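An internal consistency pass can be approximated by extracting the same kind of claim from every section and flagging categories where sections disagree. In this sketch, the claim categories, the orchestration platform names, and the regular expressions are hypothetical assumptions; the platforms and versions actually named in the reviewed overview are not given in this post.

```python
import re
from collections import defaultdict

# Hypothetical claim categories and extraction patterns, not taken from the reviewed document.
CLAIM_PATTERNS = {
    "container orchestration": re.compile(r"\b(kubernetes|amazon ecs|nomad|docker swarm)\b", re.I),
    "tls version": re.compile(r"\btls\s*(1\.[0-3])\b", re.I),
}


def find_inconsistencies(sections: dict[str, str]) -> dict[str, dict[str, set[str]]]:
    """Return {category: {claimed value: sections}} for categories with more than one value."""
    conflicts = {}
    for category, pattern in CLAIM_PATTERNS.items():
        seen = defaultdict(set)
        for section, text in sections.items():
            for match in pattern.finditer(text):
                # Normalize the captured value so "Kubernetes" and "kubernetes" agree.
                seen[match.group(1).lower()].add(section)
        if len(seen) > 1:
            conflicts[category] = dict(seen)
    return conflicts


# Illustrative sections, invented for this example.
sections = {
    "Architecture": "Services run on Kubernetes and all traffic uses TLS 1.2.",
    "Operations": "Deployments are scheduled on Amazon ECS.",
    "Security": "External endpoints require TLS 1.3.",
}
for category, values in find_inconsistencies(sections).items():
    print(category, {value: sorted(where) for value, where in values.items()})
```

Each flagged category points back to the specific sections that disagree, which is the kind of evidence a follow-up question needs.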

Document Meta & Consistency Check

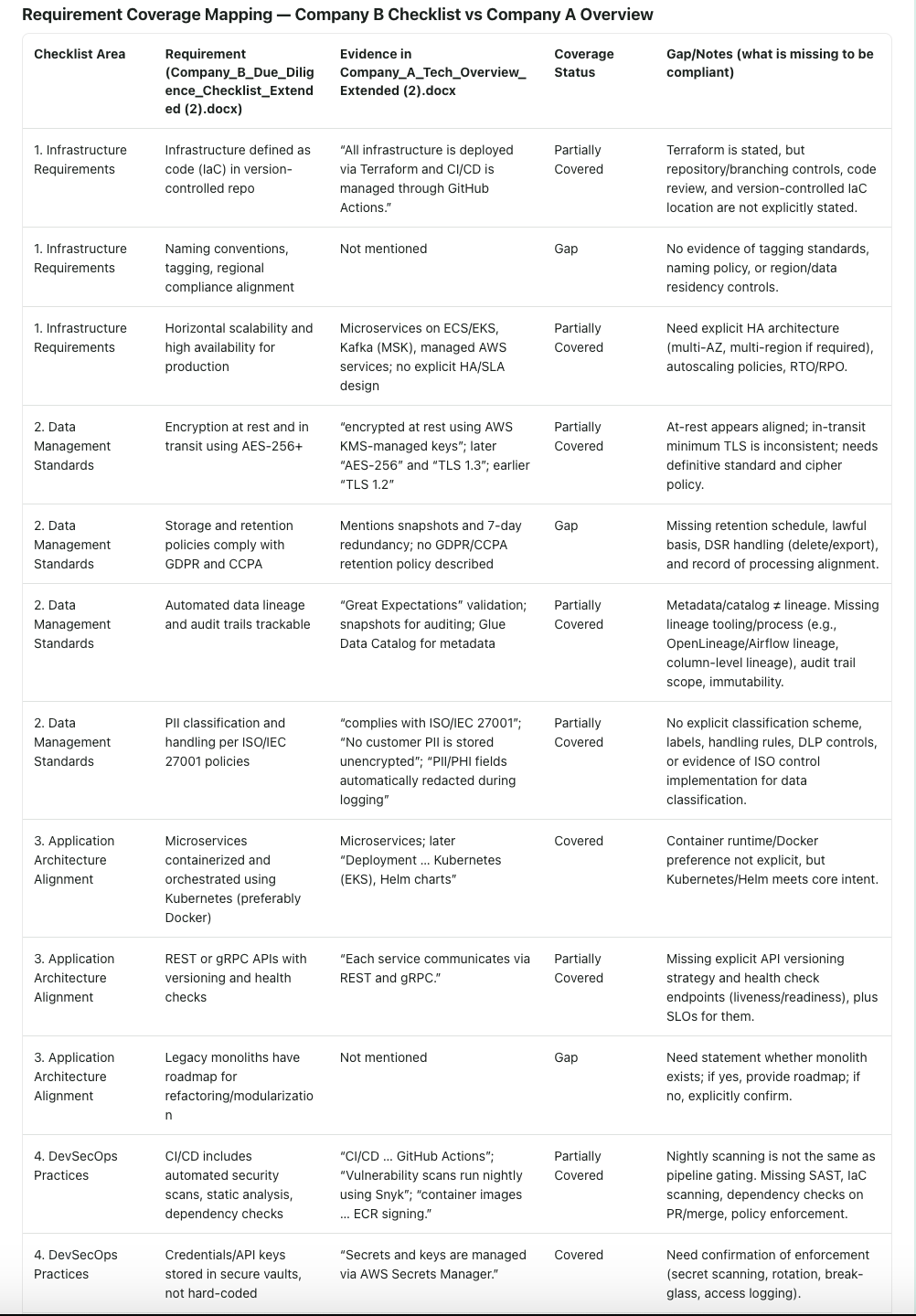

Mapping Requirements to Reality

When the acquiring company’s checklist was applied directly to the technical overview, the nature of the gaps became clearer. Many requirements landed in the “partially covered” category. Infrastructure was described as managed through infrastructure-as-code, but details about repository controls, branching strategy, and environment separation were not explicitly stated. Encryption was referenced, but minimum standards and exception handling were unclear. CI/CD pipelines were mentioned, but enforcement points and security gating mechanisms were not described.

A smaller but important set of requirements was not addressed at all. Data retention schedules, GDPR/CCPA deletion workflows, lineage tooling, regression testing requirements, and API versioning policies were either missing or too ambiguous to verify.

What emerged was not a picture of negligence, but of narrative documentation that was never designed to be evaluated against a formal checklist. That mismatch is common, and it is exactly where diligence risk accumulates.

Requirement Coverage Mapping

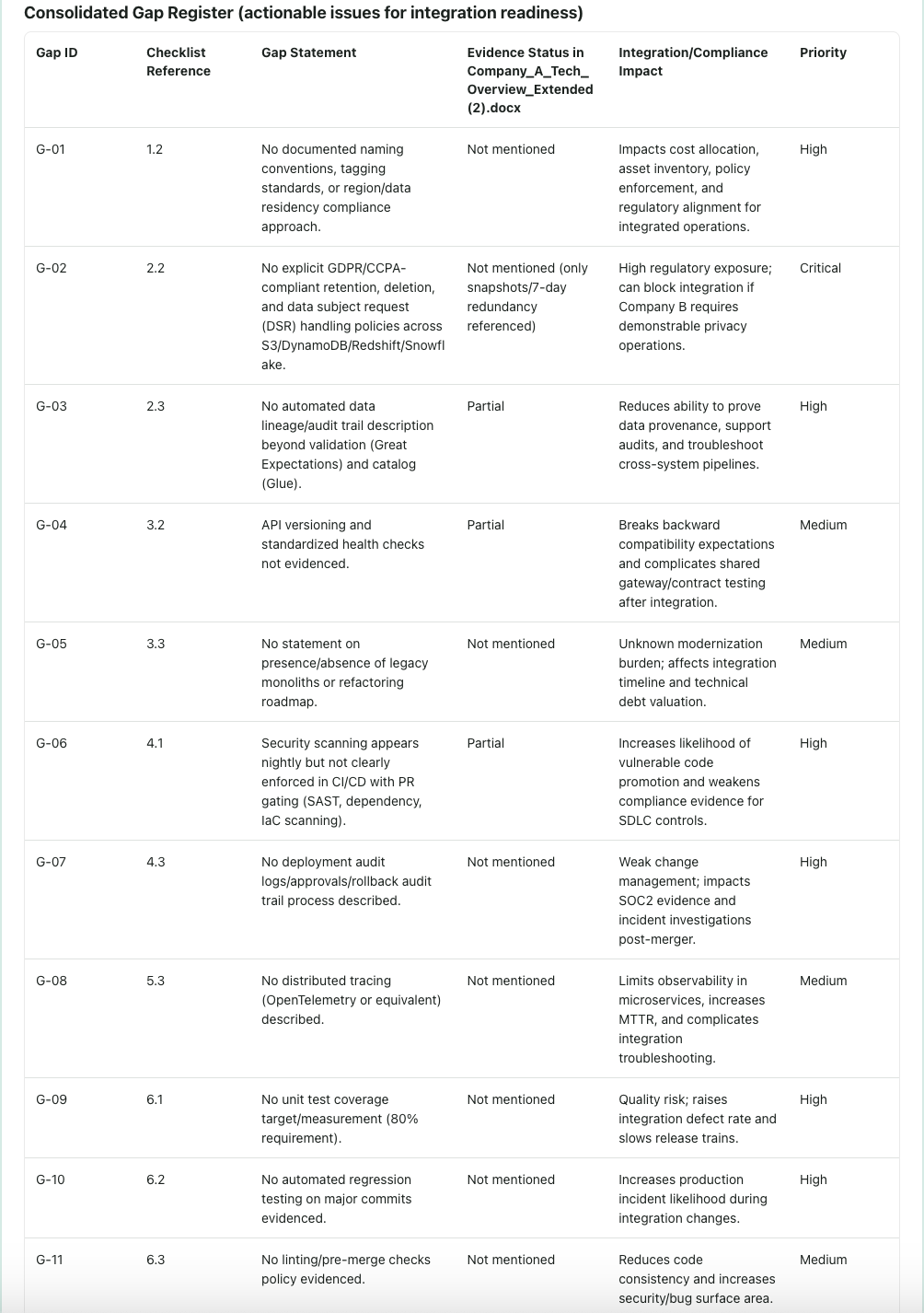

From Gaps to Integration Risk

Once gaps were consolidated, they stopped being abstract concerns and became concrete integration risks. Missing retention policies translated directly into regulatory exposure after systems were combined. Unclear TLS standards raised questions about partner compatibility and compliance attestations. Weak CI/CD enforcement created risk during integration-driven code changes. Lack of lineage and observability threatened auditability and incident response. These risks were not theoretical. Each one mapped back to a specific missing or ambiguous statement in the documentation. That traceability is what makes the output actionable. Instead of saying “security posture unclear,” the analysis could point to exactly what evidence was missing and why it mattered. This shift, from vague concern to explicit risk, is where diligence becomes useful for decision-making.
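To illustrate the traceability described above, here is a hypothetical gap-register schema in which every entry records the requirement it came from, what evidence is missing, and the integration risk it maps to. The field names, IDs, and example rows are illustrative assumptions, not the register produced in this analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GapEntry:
    """One row of a consolidated gap register (hypothetical schema)."""
    gap_id: str
    requirement: str        # checklist requirement the gap traces back to
    status: str             # "partially covered" or "gap"
    missing_evidence: str   # what the overview fails to state explicitly
    integration_risk: str   # the concrete post-close consequence


def consolidate(entries: list[GapEntry]) -> dict[str, list[GapEntry]]:
    """Group entries by integration risk so related gaps roll up into one line item."""
    register: dict[str, list[GapEntry]] = {}
    for entry in entries:
        register.setdefault(entry.integration_risk, []).append(entry)
    return register


def describe(entry: GapEntry) -> str:
    """Render a traceable finding rather than a vague 'posture unclear' statement."""
    return (f"{entry.gap_id} [{entry.status}] {entry.requirement}: "
            f"missing {entry.missing_evidence} -> {entry.integration_risk}")


# Illustrative rows, invented for this example.
gaps = [
    GapEntry("G-01", "Data retention", "gap", "retention periods per data class", "Regulatory exposure"),
    GapEntry("G-02", "GDPR/CCPA deletion", "gap", "deletion workflow", "Regulatory exposure"),
    GapEntry("G-03", "Encryption in transit", "partially covered", "minimum TLS version", "Partner compatibility"),
]
for risk, items in consolidate(gaps).items():
    print(risk, [g.gap_id for g in items])
print(describe(gaps[2]))
```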

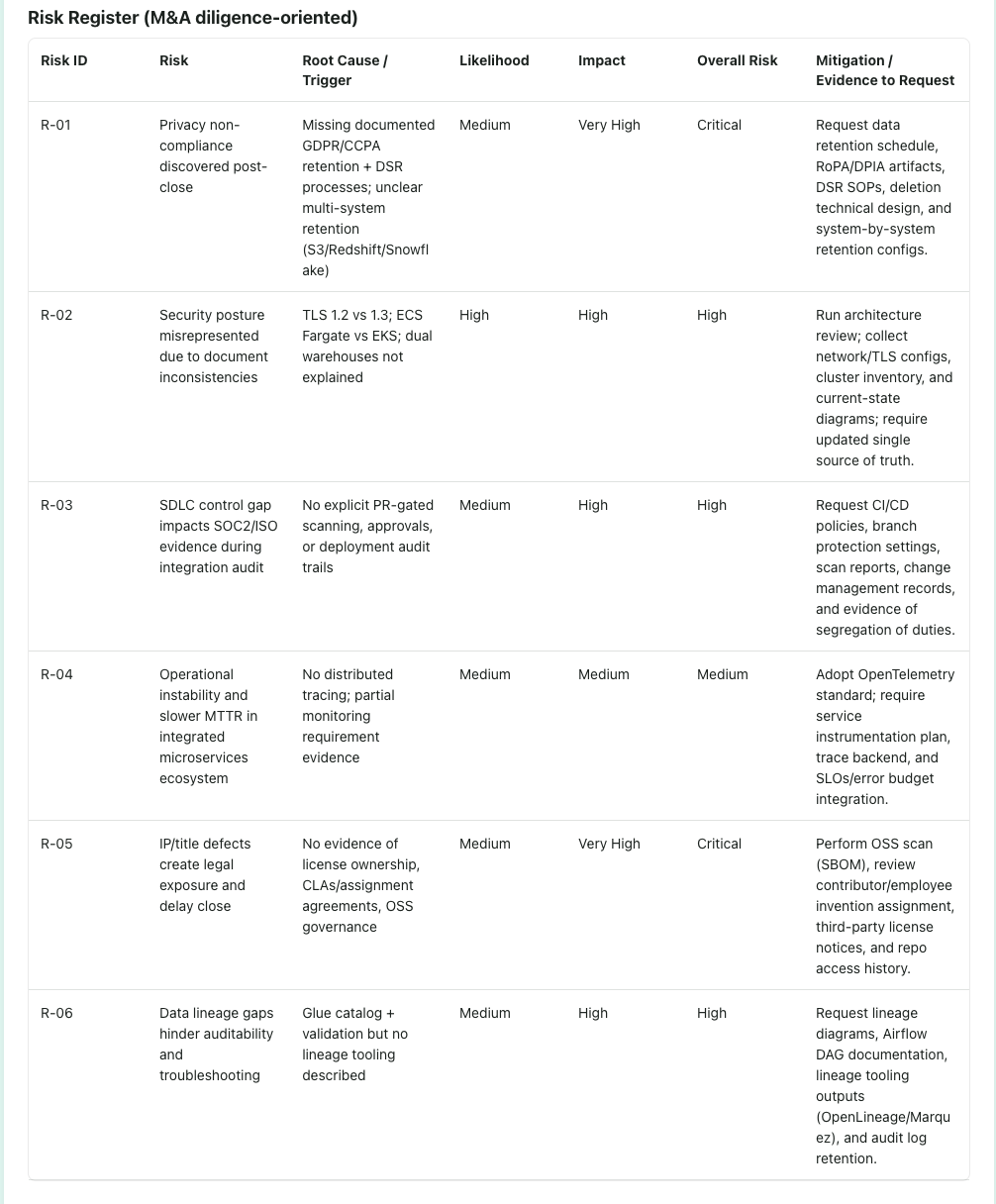

Consolidated Gap Register

Risk Framed for M&A, Not Compliance Theater

Due diligence often confuses compliance activity with risk understanding. Checklists get completed and certifications are referenced, but those signals alone don’t tell an acquiring team what will actually create friction after close. In this analysis, gaps were framed in deal terms rather than audit language. Missing retention policies translated into post-close regulatory exposure. Inconsistent TLS standards became partner and audit risk during integration. Weak CI/CD controls signaled higher failure rates during integration-driven changes. Each issue was tied to likelihood, impact, and downstream cost, not just compliance alignment. This framing matters in M&A. Acquirers don’t need more attestations; they need clarity on what affects valuation, timing, and integration risk. When diligence highlights those connections, it stops being symbolic and starts supporting real decisions.
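One common way to frame risks in deal terms is a likelihood-times-impact score. The sketch below assumes 1 to 5 scales and arbitrary rating bands; the post does not specify how likelihood and impact were scored, and the example values are invented for illustration rather than findings from this review.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int   # 1 (rare) to 5 (almost certain); hypothetical scale
    impact: int       # 1 (negligible) to 5 (affects valuation or timing); hypothetical scale
    cost_note: str    # free-text downstream cost, e.g. expected remediation work

    def __post_init__(self) -> None:
        # Reject values outside the assumed 1-5 scales.
        for field_name in ("likelihood", "impact"):
            value = getattr(self, field_name)
            if not 1 <= value <= 5:
                raise ValueError(f"{field_name} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        return self.likelihood * self.impact

    @property
    def rating(self) -> str:
        # Hypothetical bands over the 1-25 score range.
        if self.score >= 15:
            return "high"
        if self.score >= 8:
            return "medium"
        return "low"


def rank(risks: list[Risk]) -> list[Risk]:
    """Order risks from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


risks = [
    Risk("Post-close regulatory exposure (retention)", 4, 5, "retention policy build-out"),
    Risk("Partner/audit risk (TLS inconsistency)", 3, 3, "endpoint reconfiguration"),
    Risk("Integration change failures (weak CI/CD)", 4, 3, "pipeline gating work"),
]
for r in rank(risks):
    print(f"{r.score:>2} {r.rating:<6} {r.name}")
```

Keeping the downstream cost as a separate field, rather than folding it into the score, leaves room for the deal team to weigh remediation effort against valuation and timing.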

Risk Register (M&A diligence-oriented)

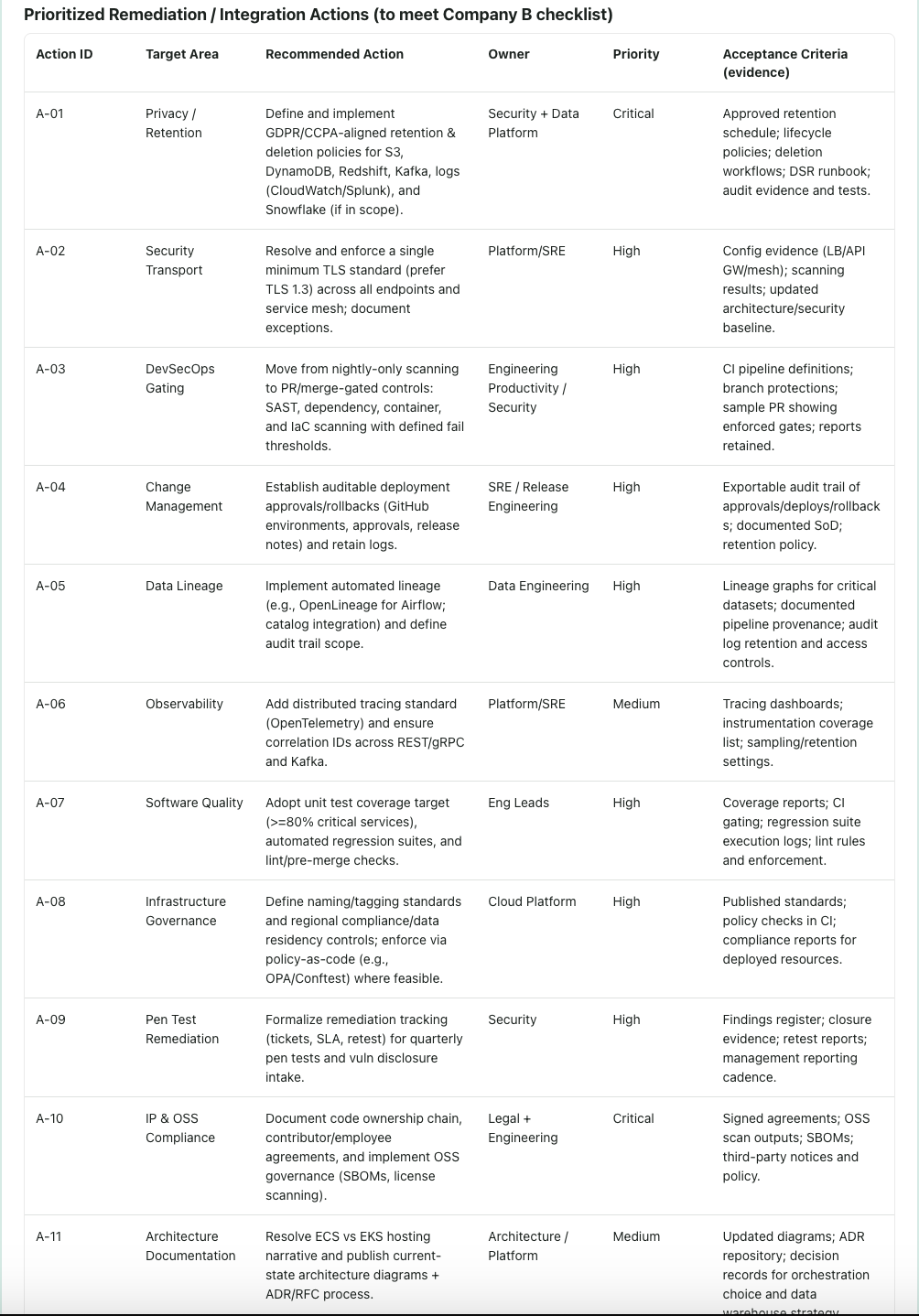

Making the Work Actionable

The final outputs of the analysis were designed to support real diligence workflows. Gaps were prioritized based on integration and compliance impact. Risks were framed in terms of likelihood and consequence. Remediation actions were tied to owners, priorities, and acceptance criteria. Equally important, the analysis produced a set of clarifying questions tied directly to checklist requirements. Instead of open-ended follow-ups, the acquiring team could ask precise, defensible questions and request specific evidence. This reduces friction. It shortens diligence cycles. And it helps both sides understand what actually needs to be addressed before close.
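A remediation plan becomes actionable when each item traces back to a gap, has an accountable owner, and closes only against explicit acceptance criteria. The sketch below assumes a hypothetical schema in which priority 1 means "resolve before close" and higher numbers can be deferred; the gap IDs, owners, and criteria in the example are illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class RemediationAction:
    """A remediation or integration action (hypothetical schema)."""
    action: str
    gap_id: str     # traces back to an entry in the gap register
    owner: str      # accountable party
    priority: int   # assumed: 1 = resolve before close, higher = can be deferred
    acceptance_criteria: list[str] = field(default_factory=list)
    criteria_met: set[str] = field(default_factory=set)

    def is_done(self) -> bool:
        """An action closes only when every acceptance criterion has been met."""
        return bool(self.acceptance_criteria) and set(self.acceptance_criteria) <= self.criteria_met


def plan(actions: list[RemediationAction]) -> list[RemediationAction]:
    """Reject actions without an owner or acceptance criteria, then order by priority."""
    for a in actions:
        if not a.owner or not a.acceptance_criteria:
            raise ValueError(f"{a.gap_id}: every action needs an owner and acceptance criteria")
    return sorted(actions, key=lambda a: a.priority)


actions = [
    RemediationAction("Publish minimum TLS standard", "G-03", "Target security lead", 2,
                      ["Minimum TLS version documented", "Exceptions listed"]),
    RemediationAction("Define retention schedule", "G-01", "Target data owner", 1,
                      ["Retention period per data class", "Regulatory basis cited"]),
]
ordered = plan(actions)
ordered[0].criteria_met.add("Retention period per data class")
print([a.gap_id for a in ordered], ordered[0].is_done())
```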

Prioritized Remediation / Integration Actions

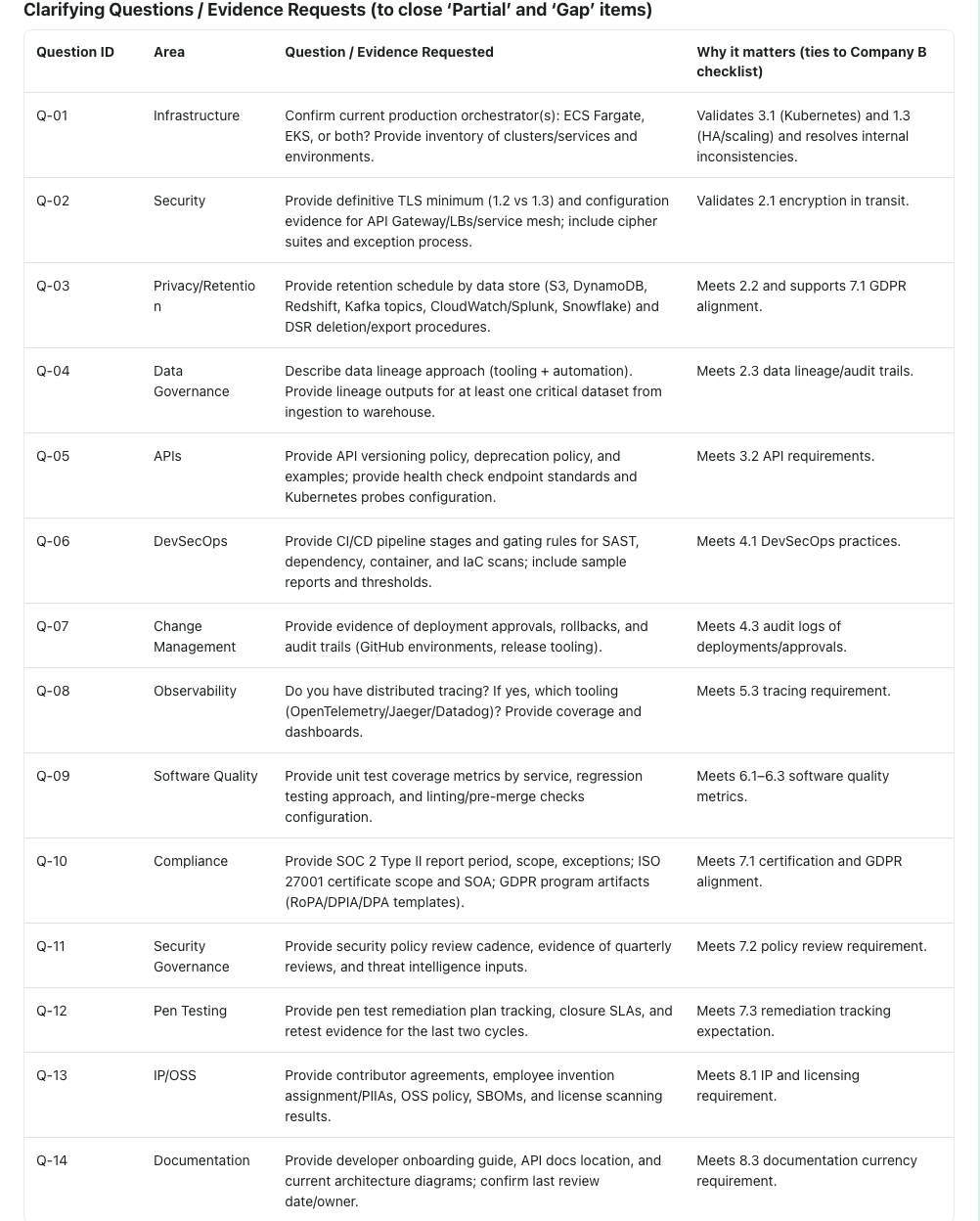

Clarifying Questions as a Diligence Output

One of the most practical outputs of the analysis wasn’t a score or a summary, but a set of targeted clarifying questions. Each question was tied directly to a specific requirement that was partially covered or ambiguous, making follow-up precise rather than speculative. Instead of broad requests like “provide more detail on security controls,” the questions focused on concrete mechanisms: how encryption standards were enforced, where retention policies were documented, how CI/CD gates were applied, and which systems acted as sources of record. This shifted diligence conversations from defensive explanations to evidence-based clarification. In an M&A context, this matters. Clear questions reduce back-and-forth, shorten diligence cycles, and lower friction between buyer and target. More importantly, they ensure that unresolved gaps are understood deliberately rather than discovered accidentally after close.
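Clarifying questions can be generated mechanically once each partially covered requirement lists the attributes it fails to state. This sketch assumes a hypothetical mapping from requirement to missing attributes and a single question template; in practice the wording would be tailored per requirement.

```python
def clarifying_questions(findings: dict[str, list[str]]) -> list[str]:
    """Turn each missing attribute into one precise question paired with an evidence request.

    `findings` is a hypothetical input mapping each requirement to the attributes
    the technical overview did not state explicitly.
    """
    questions = []
    for requirement, missing in findings.items():
        for attribute in missing:
            questions.append(
                f"[{requirement}] How is {attribute} defined and enforced, and what "
                f"evidence (policy, configuration, or log excerpt) demonstrates it?"
            )
    return questions


# Illustrative findings; a fully covered requirement contributes no questions.
findings = {
    "Encryption in transit": ["the minimum TLS version", "exception handling"],
    "CI/CD security": ["security gating before deployment"],
    "Data retention": [],
}
for q in clarifying_questions(findings):
    print(q)
```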

Clarifying Questions / Evidence Requests

Why This Scales Beyond One Deal

When gaps are identified early and tied to explicit evidence, diligence stops being a confidence exercise and becomes a planning tool. Teams can decide what must be resolved pre-close, what can be deferred, and what materially affects valuation or timing. Most diligence failures don’t come from missing documents. They come from missing connections between them. Making those connections visible changes how deals are evaluated and how surprises are avoided.

If you have questions, feedback, or want to learn more about how Riftur is used, contact us. You can also visit our home page at riftur.com to start testing the platform for your use case. Read other posts on our blog for related topics and updates on Riftur.

© 2025 Riftur — All Rights Reserved